Of the more than $4 trillion the world expends on health care each year, close to 60 percent is spent on the clinical workforce—the doctors, nurses, pharmacists, and other professionals who provide patient care. Yet few health systems estimate their future workforce needs accurately or are able to develop a strategy for their clinical workforce that effectively keeps supply and demand near equilibrium.

The resulting supply/demand imbalances impair patient care, demoralize clinicians, and make service delivery inefficient. As a consequence, they compromise a health system’s ability to deliver its service strategy—its goals for providing high-quality, accessible, cost-effective care. Those goals can be met only if the health system has the right number of the right clinicians in the right places.

At the heart of the problem lies what we have begun to call the workforce planning paradox: because education and training cycles in health care are often quite long, traditional supply/demand signals are frequently muffled or ineffective. The consequences of planning decisions can take many years to become clear, by which time the planners are rarely still in their posts. Furthermore, the evidence base required for good planning is difficult to gather and codify.

Our work in a wide range of areas, including the Pacific Rim, the Middle East, Europe, and North America, has enabled us to identify several steps health systems can take to develop an effective workforce strategy. First, they need to improve their ability to predict the demand for clinicians and match supply to it. But as crucial as this step is, the systems must also accept that they will never be able to forecast health care needs perfectly. Thus, they must also monitor the labor market on an ongoing basis and introduce greater flexibility into their workforces. Furthermore, they must allocate the risk associated with increased flexibility appropriately and establish clear accountability for workforce management.

The approach each health system uses to implement these actions will depend on a number of variables, including its service strategy, level of central control, and cultural norms (the system’s implicit social contract, for example). But a health system that does not take these steps will find itself unable to manage almost two-thirds of its spending effectively.

Consequences of supply/demand imbalances

Mismatches between clinician supply and demand are causing problems in health systems around the world. Australia, for example, is currently facing a severe clinician shortage as a result of two factors: tight collegiate admission practices and reliance on state-based workforce planning, rather than national coordination and decision making. The shortage, which is particularly pronounced in rural areas, has forced Australia to greatly increase its use of foreign-trained clinicians, who now make up 25 percent of the workforce (compared with 19 percent a decade ago). In addition, the shortage has impaired quality and access levels in underresourced facilities and has marginalized some health services. Growing public concern about the clinician shortage has created a political imperative to increase by half the funded undergraduate medical school placements over the coming decade; nursing school placements will also be enlarged.

In contrast, the United Kingdom discovered not long ago that it had more medical students than it was able to accommodate, another result of a decade-old central planning decision. In the late 1990s, the government realized that it was having to recruit a high volume of doctors from other countries and so raised medical school enrollment by 50 percent. Over time, tensions arose as the increased number of students began to compete for the limited number of specialist training slots available. A poorly implemented attempt to fix the problem actually exacerbated it, leading to medical students demonstrating in the streets. Training spots for the students were eventually created. But when these doctors-in-training finish their programs, the National Health Service (NHS) could find itself facing a second problem: an excess of doctors in certain specialties. Because the NHS’s implicit social contract guarantees doctors employment for life, it has no easy way to eliminate the excess.

Supply/demand mismatches can also arise in countries that allow market forces to influence how many clinicians are trained, how and where those clinicians practice, and what they can earn. Both Japan and the United States, for example, are experiencing severe shortages of certain types of clinicians.

In Japan, the shortage of hospital-based specialists has become so acute that it regularly makes headlines. Newspaper reports have described women giving birth in taxis after being shuttled from hospital to hospital because no obstetricians were available. A root cause of the shortage is the fact that specialists earn markedly less than their colleagues in primary care do. In Japan, the government imposes heavy restrictions on hospital reimbursements, which limits the salaries paid to specialists, who are hospital employees. As a result, many specialists switch to primary care, where there are fewer restrictions and potential earnings are much higher.

Financial incentives have had the opposite effect in the United States. Medical education is largely self-funded in that country, and many new doctors graduate with a debt of $100,000 or more. It is therefore no wonder that many American doctors migrate to highly paid specialties and not to primary care, where the need is greater. The resulting imbalance in workforce supply has increased health care costs in at least two ways. First, when primary care doctors are unavailable, specialists must provide primary care services, but they charge a higher rate to do so. Second, in regions with a high number of specialists, the cost of care does not typically decrease; rather, utilization rates—and overall costs—rise. This type of supply-induced demand has also been seen in other countries. Thus, it seems clear that all health systems, regardless of whether they rely primarily on central planning or allow a greater role for market forces, could benefit by taking a more rigorous, proactive approach to managing their market for clinicians.

Why workforce planning is done poorly

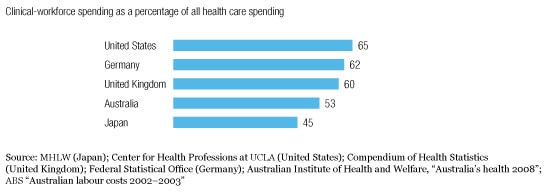

As financial pressures have mounted, most health systems have sought to control costs and optimize spending. Many of them, for example, carefully limit their pharmaceutical expenditures, and they evaluate their infrastructure investments and other capital expenditure costs closely. However, few systems have found a way to optimize their workforce spending, even though clinicians account for up to two-thirds of their budgets (Exhibit 1). A variety of factors helps explain why.

Exhibit 1: Workforce spending is high

First, the diversity of the workforce is daunting, given the numerous types of clinicians and the different settings in which they work. It is not sufficient for a health system to forecast the number of doctors it will need in ten years’ time; it must evaluate how many general practitioners (GPs) it will require, how many surgeons, and how many other specialists. Similarly, the health system must be able to predict the various types of nurses and allied health professionals it will require.

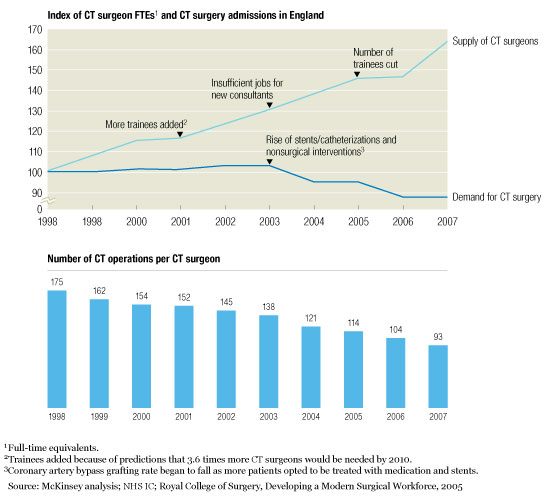

The length of clinical training compounds the difficulty. Pharmacists must go to school for 5 to 7 years. A doctor’s training often lasts 7 to 12 years or more, depending on the specialty. Yet advances in medical treatment can quickly alter workforce needs. In the past two decades, for example, the advent of stenting and other minimally invasive methods for opening blocked arteries has markedly reduced the demand for bypass surgery—and for the cardiothoracic (CT) surgeons who perform that procedure. The United Kingdom did not recognize this trend until too late, and it now has a significant oversupply of CT surgeons (Exhibit 2).

Exhibit 2: Supply/demand mismatch

Matching supply to demand is also difficult because of the number of stakeholders involved. Each group of clinicians has its own educational providers, professional societies, and often unions. Hospitals and payors are other key constituencies. Many of these stakeholders have a vested interest in maintaining the status quo and thus can make it hard for a health system to change.

Accurate forecasting

In our experience, most health systems focus primarily on the supply of clinicians; demand signals are often ignored. In recent years, however, a few health systems have made a conscious effort to deal with workforce issues more effectively. The best of them designate specific leaders as responsible for workforce strategies; these strategists use rigorous, evidence-based methods to forecast the future demand for health services and then to match the clinician supply to it. (For a look at one such system, see sidebar, “Developing a regional workforce strategy: A case study.”)

It is crucial that some of the workforce strategists be clinicians, especially doctors. Clinicians are in the best position to assess the impact of trends affecting supply and demand, especially the medical innovations that could produce disruptions. Furthermore, without clinician participation, it is doubtful that the changes needed to balance supply and demand could be implemented. Strong, vocal clinician support for those changes, by contrast, can often help break the political logjams that hinder reform.

The workforce strategists begin by investigating their system’s current “market” for clinicians. As part of this process, they try to pinpoint where and why there is a mismatch between the system’s needs and the number, type, and quality of the available clinicians. In addition, they identify all key stakeholders who influence clinician supply and demand and then chart the interactions among them. Once the system’s primary current problems have been uncovered, the strategists can investigate ways to correct them and develop potential solutions with stakeholders.

Next, the strategists take an initial look forward to identify where supply/demand imbalances are most likely to emerge in the future. All too often, health systems allocate future training spots through a process that, in effect, simply multiplies current-year training volumes by a growth factor. This process usually does no more than magnify a system’s existing shortcomings. The strategists’ initial assessment provides a more useful base to work from; however, it will eventually have to be supplemented by detailed investigations into future supply and demand.
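To see why proportional scaling only magnifies existing shortcomings, consider a minimal sketch in Python. The specialties, slot counts, and growth rate are hypothetical and are not drawn from any health system; the point is simply that applying one growth factor to every specialty leaves the training mix unchanged.

```python
# Hypothetical current-year training slots by specialty (illustrative only).
current_slots = {"general_practice": 400, "ct_surgery": 60, "cardiology": 40}


def scale_by_growth(slots, growth):
    """Allocate next year's slots by applying one growth factor to all specialties."""
    return {specialty: n * (1 + growth) for specialty, n in slots.items()}


def training_mix(slots):
    """Share of total training slots held by each specialty."""
    total = sum(slots.values())
    return {specialty: n / total for specialty, n in slots.items()}


next_year = scale_by_growth(current_slots, growth=0.10)

# Every specialty grows, but its share of the pipeline stays the same,
# so an existing oversupply or shortage is carried forward at larger scale.
for specialty in current_slots:
    before = training_mix(current_slots)[specialty]
    after = training_mix(next_year)[specialty]
    print(f"{specialty}: share before {before:.3f}, after {after:.3f}")
```

A demand-led allocation would instead set each specialty's slots from its own forecast, which is what the detailed investigations into supply and demand are meant to provide.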

Demand should come first. To analyze future demand, the strategists must understand both how activity levels—the rate at which various services are offered—are likely to evolve and how the services will be delivered in the future. Activity levels are determined by demographics, changes in disease prevalence, medical innovations, policy and reimbursement shifts, and consumer expectations. The best available evidence from clinical studies and similar high-quality sources should be used to assess these factors.

Both the pattern and volume of demand for clinicians will depend on which clinicians are likely to offer the services in the future, where the services will be provided, and how productive the clinicians will be. For example, stenting has done more than decrease the need for CT surgeons. It has increased the need for interventional cardiologists, and by shortening the length of hospital stay, it has altered the need for inpatient nurses.
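The relationship between activity levels, delivery model, and clinician demand can be sketched as a simple calculation. This is an illustrative simplification, not a method prescribed here: the `ServiceLine` structure, the growth rate, the split of activity between clinician types, and the productivity figures are all assumptions chosen to mirror the stenting example.

```python
from dataclasses import dataclass


@dataclass
class ServiceLine:
    name: str
    annual_activity: float   # procedures per year today (hypothetical)
    activity_growth: float   # assumed annual growth in activity
    share_by_clinician: dict  # fraction of activity each clinician type delivers
    productivity: dict        # procedures one full-time clinician handles per year


def required_clinicians(line, years_ahead):
    """Full-time clinicians needed per type after `years_ahead` years."""
    future_activity = line.annual_activity * (1 + line.activity_growth) ** years_ahead
    return {
        kind: future_activity * share / line.productivity[kind]
        for kind, share in line.share_by_clinician.items()
    }


# Same activity level, two delivery models: before and after a shift
# from bypass surgery toward stenting (all numbers are made up).
before_shift = ServiceLine(
    "revascularization", 10_000, 0.0,
    {"ct_surgeon": 0.6, "interventional_cardiologist": 0.4},
    {"ct_surgeon": 150, "interventional_cardiologist": 350},
)
after_shift = ServiceLine(
    "revascularization", 10_000, 0.0,
    {"ct_surgeon": 0.3, "interventional_cardiologist": 0.7},
    {"ct_surgeon": 150, "interventional_cardiologist": 350},
)

print(required_clinicians(before_shift, years_ahead=0))
print(required_clinicians(after_shift, years_ahead=0))
```

Holding total activity constant while changing who delivers it shows how a change in the delivery model alone can halve the need for one specialty and raise it for another.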

The strategists then investigate whether the right clinicians will be available to provide the needed services. In addition to determining how many of each type of clinician the health system currently has, they ascertain where the clinicians are in their careers (how close they are to retirement, for example, and how likely they are to switch to a part-time schedule). The strategists also calculate how many new clinicians are being trained and, if necessary, how many could be imported from abroad. In addition, they identify barriers that are limiting the clinician supply in certain settings (an unwillingness to work in rural areas, for example), as well as any other factors that are causing imbalances within the supply.
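The supply-side elements listed above (current headcount, retirements, shifts to part-time work, new trainees, and imports) fit naturally into a stock-and-flow projection. The sketch below is one hedged way to express it; the function name, the constant annual rates, and the example inputs are assumptions for illustration, and a real model would vary them by age band, specialty, and setting.

```python
def project_supply(current_fte, annual_graduates, retirement_rate,
                   part_time_shift_rate, annual_imports, years):
    """Project full-time-equivalent (FTE) supply year by year.

    Each year the existing stock shrinks through retirement and through
    clinicians reducing their hours, then grows through newly trained
    and foreign-trained entrants. Rates are assumed constant.
    """
    supply = [float(current_fte)]
    for _ in range(years):
        stock = supply[-1]
        retirements = stock * retirement_rate
        fte_lost_to_part_time = stock * part_time_shift_rate
        stock = stock - retirements - fte_lost_to_part_time
        stock += annual_graduates + annual_imports
        supply.append(stock)
    return supply


# Hypothetical specialty: 1,000 FTEs today, 40 graduates a year,
# 5 percent retiring and 1 percent of FTE lost to part-time work annually.
projection = project_supply(1000, 40, 0.05, 0.01, annual_imports=0, years=5)
for year, fte in enumerate(projection):
    print(f"year {year}: {fte:.0f} FTE")
```

Setting `annual_imports` to a positive number shows how much foreign recruitment would be needed to hold supply steady under the same assumptions.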

To test how well supply and demand will match, the strategists model a variety of scenarios for how health care delivery is likely to evolve. For example, to investigate how many GPs the health system will need in ten years’ time, they could compare the impact of achieving productivity gains among the GPs against allowing nurses to provide more primary care services. The scenarios enable them to spot the largest potential problems as well as some of the most promising solutions.
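The GP comparison above can be expressed as a small scenario table. The following is a sketch under stated assumptions: visit volumes, GP supply, productivity, and the share of visits shifted to nurses are invented, and the scenario names are labels chosen for this example.

```python
def gp_gap(visits, gp_supply, gp_share, visits_per_gp):
    """Shortfall (positive) or surplus (negative) of GPs for a scenario."""
    gps_needed = visits * gp_share / visits_per_gp
    return gps_needed - gp_supply


# Hypothetical scenarios for primary care delivery in ten years' time.
scenarios = {
    "baseline": {"gp_share": 1.0, "visits_per_gp": 5000},
    # GPs see 10 percent more patients each.
    "productivity_gain": {"gp_share": 1.0, "visits_per_gp": 5500},
    # Nurses take over 15 percent of primary care visits.
    "nurse_led_care": {"gp_share": 0.85, "visits_per_gp": 5000},
}


def compare_scenarios(visits, gp_supply, scenarios):
    """Return the GP gap under each scenario."""
    return {
        name: gp_gap(visits, gp_supply, **params)
        for name, params in scenarios.items()
    }


results = compare_scenarios(visits=11_000_000, gp_supply=2000, scenarios=scenarios)
for name, gap in results.items():
    print(f"{name}: gap of {gap:+.0f} GPs")
```

With these illustrative inputs the baseline shows a shortfall of about 200 GPs, the productivity scenario roughly closes it, and the nurse-led scenario turns it into a surplus of about 130. The figures themselves mean nothing outside the example; what matters is that the same demand can be met in different ways.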

How far in the future supply and demand should be forecast and how specific the forecasts should be depend on which part of a health system the strategists are considering. A hospital needs to understand its near-term (two- to three-year) workforce requirements. A regional health authority needs a broader perspective on the trends affecting supply and demand, and thus its forecast should look six to eight years ahead. For the health system as a whole, the forecast should project even further. Only a long-range perspective will enable the system’s strategists to alter training pipelines, particularly for doctors, and to assess the full impact of changes in demographics, disease prevalence, and the other factors influencing demand.

Once the strategists have identified where the health system has or is likely to develop supply/demand imbalances, they can begin taking steps to correct the underlying problems. How this can best be done will depend, to a large extent, on the characteristics of the system, but in almost all cases it will require strong leadership to manage the clinical training pipeline as well as the clinician workforce proactively.

Dealing with uncertainty

Because forecasting is an inexact science, the strategists must make sure that the health system can respond quickly to unexpected supply and demand shocks. This requires that they assess workforce supply and demand regularly, rather than working through a strategic exercise once, and also that they establish “trigger” supply/demand ratios (early warning signals that problems are arising). Even more important, the strategists must ensure that the workforce has a high degree of flexibility. Greater flexibility does far more than simply make it easier for a health system to respond to shocks; it helps prevent such shocks from arising in the first place. But achieving the necessary level of flexibility entails risk, which must be allocated fairly and transparently among the key stakeholders.
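A "trigger" ratio check of the kind described above might look like the sketch below. The thresholds of 0.95 and 1.05, the specialty names, and the headcounts are assumptions for illustration; each health system would need to set its own bands and review frequency.

```python
def check_triggers(supply, demand, low=0.95, high=1.05):
    """Flag specialties whose supply/demand ratio leaves the [low, high] band.

    `supply` and `demand` map specialty names to full-time-equivalent counts.
    Demand values are assumed to be positive. Returns a dict of
    specialty -> (status, ratio) for flagged specialties only.
    """
    alerts = {}
    for specialty, needed in demand.items():
        ratio = supply.get(specialty, 0) / needed
        if ratio < low:
            alerts[specialty] = ("shortage", ratio)
        elif ratio > high:
            alerts[specialty] = ("oversupply", ratio)
    return alerts


# Hypothetical snapshot from a regular (for example, annual) review.
supply = {"general_practice": 900, "ct_surgery": 120, "nursing": 1000}
demand = {"general_practice": 1000, "ct_surgery": 100, "nursing": 1000}

for specialty, (status, ratio) in check_triggers(supply, demand).items():
    print(f"{specialty}: {status} (supply/demand = {ratio:.2f})")
```

Running the check on every review cycle, rather than once, is what turns the ratios into early warning signals.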

Increasing flexibility

Although different models of clinical education and training are used in different countries, most of them lack flexibility. Students must often choose an area to specialize in fairly early, and they usually encounter significant obstacles if they want to change tracks. By enabling clinicians to specialize later in their careers, a health system can make it easier for them to respond to shifting service needs and increase its own ability to respond to demand disruptions.

Similarly, greater flexibility could be achieved by making it simpler for clinicians to change their area of specialization once they have entered practice. In some countries, for example, CT surgeons have responded to the decreased demand for bypass surgery by learning how to implant cardiac defibrillators (ICD/CRT devices). Health systems can increase the flexibility of their workforce by making it easier for all doctors to get similar types of cross-training.

Admittedly, it will be difficult for most health systems to introduce this type of flexibility. It can be done, however, as the ease with which Japanese specialists can transfer into primary care attests.

Workforce flexibility can also be improved by enhancing the skills and capabilities of health professionals who are not doctors. In many countries, the role of nurse practitioners and physician assistants has broadened considerably in recent years. These health professionals can now legally perform many of the same tasks that GPs do, and as their scope of practice expands, they may be able to compensate for the doctor shortage in other supply-constrained specialties. What is safe and appropriate for nurse practitioners and physician assistants to do will vary by specialty, of course. But because these health professionals can be trained much more rapidly than doctors can, they offer an especially attractive way to increase workforce flexibility.

Greater flexibility can also be achieved either by importing clinicians from abroad or by not guaranteeing employment to all locally trained clinicians. As Exhibit 3 shows, the available (albeit somewhat imperfect) statistics suggest that foreign-trained clinicians account for 20 percent or more of all doctors in many English-speaking countries. Importing clinicians is substantially more difficult, however, for countries with high language and cultural barriers (Japan, for example). Also, importation raises an ethical question: is it appropriate for wealthy countries to recruit clinicians from poorer countries, potentially leaving those countries with a clinician shortage? Nevertheless, importation is often the easiest way for a country to increase workforce flexibility.

Exhibit 3: Foreign-trained clinicians are common

Not guaranteeing employment may be quite difficult for health systems that have traditionally included such guarantees in their implicit social contracts. However, it can provide a way for such systems to avoid having an oversupply of clinicians and the problems that oversupply can entail, such as decreased productivity and increased costs.

Allocating risk

Most health systems lack an equitable way to allocate the risk associated with workforce supply/demand imbalances. Market-based systems assign much of the risk to the clinicians themselves; in the United States, for example, doctors in some oversupplied specialties may be unable to find jobs, yet they are still expected to pay off their school loans. (The doctors in underserved specialties usually benefit from higher salaries, though.) Many more centrally controlled systems tend to assign most of the oversupply risk to the government. Not only do the governments of these countries typically underwrite the cost of clinician training, but some of them guarantee and pay for subsequent employment. Historically, both types of systems have, in effect, transferred much of the risk of undersupply to developing nations by importing clinicians when shortages arise.

More equitable risk allocation requires that there be greater transparency into what the risk could be; otherwise, it will be impossible for stakeholders to respond to supply and demand signals. Thus, the forecasts that workforce strategists develop should be shared freely with medical schools and other educational institutions, with the hospitals that provide training slots, and with the clinicians-in-training themselves. All of these stakeholders should be expected to shoulder part of the risk. Students should have the right to know what their job prospects will be, and they should understand the consequences of selecting an oversupplied or undersupplied field. In addition, students should be offered incentives to align their decisions with the health system’s service strategy. For example, a health system that wants to deliver more care in community settings must provide clinicians with incentives and the training needed to work in those settings. Similarly, schools and hospitals should be given incentives (both positive and negative) to modulate their programs in response to supply and demand signals and to promote the service strategy.

Exactly how risk is allocated will vary from system to system. In no case, however, should all or most of the risks be borne by one side. For most health systems, clear policy decisions will have to be made about how to assign risk.

Workforce strategist’s role

No health system can establish good control over workforce supply and demand unless some of its senior leaders and key clinicians are held accountable for that task. Including key clinicians in this process helps build support for the recommended changes throughout the clinical workforce.

Many health systems designate specific people to be responsible for service planning, but few of them include workforce planning as a core competency for those people. However, best-practice health systems give their workforce strategists responsibility for monitoring the trends that affect supply and demand, assessing the impact that changes in those factors are having or will have on the health system, and driving forward the changes needed to keep those factors in balance. Let us be clear: this recommendation is not about central planning. Rather, it is about identifying where problems are likely to arise and then taking steps to remove the barriers that are stopping supply and demand from reaching equilibrium.

Ideally, a health system should have workforce strategists at the national, regional, and local levels so that service planning and workforce planning are always done in tandem. In a health system that does most service planning at the national level, primary responsibility for the workforce strategy also belongs at the national level. If the system takes more of a regional approach, then regional workforce strategists should play a greater role.

In all health systems, however, certain responsibilities for workforce planning should reflect the geographic differences in clinical labor markets. For example, medical school markets are typically national (students apply to universities across a country), whereas the markets for doctors-in-training may be regional (students often apply for training slots in specific geographic areas). Most nursing markets are also regional. Thus, national workforce strategists are in the best position to influence the inflow of medical students, but regional strategists can play an important role in influencing the number and type of training slots for doctors and nurses.

In both cases, the strategists will not have full authority over the schools and training programs, but they should be able to work closely with and have a strong influence over those institutions. And because of their need to influence training pipelines, the strategists should have strong input into, if not outright responsibility for, how the funds for clinical training are allocated. The funds should never be given as block grants; rather, they should be used only to underwrite costs for the appropriate types and number of clinicians, and only to support educational programs that offer an acceptable level of quality.

In addition, the workforce strategists must be able to influence the activities of other key stakeholders, including payors, providers, accreditation organizations, other regulators, professional societies, and unions. Often, these stakeholders have competing interests, but they all should be aligned on the ultimate goal: making sure that the clinician workforce supports the health system’s service strategy.

For many health systems, the recommendations we have made will require significant change. How health systems choose to implement the recommendations will vary considerably from system to system, but change is necessary if the ambitious plans for health care delivery that so many systems have made are to be supported by credible, realistic workforce strategies. If the health systems fail to make the necessary changes, patient care and clinician morale will suffer, and two-thirds of all health care costs will remain difficult to control.