Four experts show how AI solves real-world problems

Through deep learning techniques, McKinsey experts identified a feature – ink blots – that can be used to detect fraudulent signatures.

What better place to learn about the newest advances in artificial intelligence (AI) than at a place once called the ‘Tomorrow Lab’?

McKinsey recently hosted an educational AI forum for press and colleagues in our Waltham, Massachusetts, office, which coincidentally started out in 1998 as a ‘Tomorrow Lab’.

Following an initial stint as an accelerator for internet start-ups in the late 90s, the Waltham office became one of McKinsey’s major hubs for research and analytics. Here, some 350 data scientists, mathematicians, and developers (many of whom are doctors in their fields) are working across more than 40 different areas of expertise at any one time.

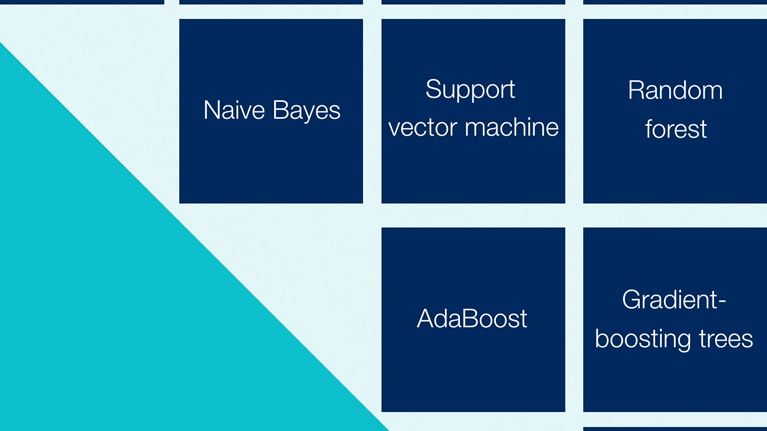

At the forum, the talk was of data lakes, random forests, and “boiling the ocean.” Nature metaphors aside, it was a chance to experience our newest techniques for analyzing and modeling data to help our clients solve some of their most intractable problems.

“The thinking in AI has changed from ‘What’s possible?’ to ‘How do I do this?’” explains Rafiq Ajani, the partner who leads the North American analytics group. “Quickly selecting the right algorithms and models from the many open-source options—and precisely customizing them for the use case—is where we are now. It’s not about ‘What’s the right answer?’ but ‘Am I asking the right questions?’—with more investment on the data side.”

The general session was followed by a whirlwind tour of demos from our experts.

Up first was Luke Gerdes, who joined McKinsey two years ago, after working for years as a civilian researcher and professor at West Point, developing analytics to help counter terrorist networks and insurgents.

Today, he focuses on natural language processing, a branch of artificial intelligence that uses analytics to derive insights from unstructured text—that is, text that is not organized into a structured table but appears in written documents.

These could be legal contracts, consumer complaints, social media—and, in one example, conversation logs between pilots and towers.

“Almost 90 percent of all data is unstructured,” he explains. “We are just at the tip of the iceberg in developing potential applications.” What’s different now is that there are application programming interfaces (APIs), or new ways to access a vast variety of data across applications, and tools have advanced to allow more nuanced, granular, and accurate analysis of text.

Luke recounts helping a company that had come through multiple acquisitions and inherited numerous sets of product data. They were using the same part number for vastly different parts and buying the same materials for different prices from different suppliers. “Their goal was simple: pay the lowest price point for the same part. We helped them rationalize their product portfolio by applying machine learning to analyze textual product descriptions—it wouldn’t have worked with SKUs or bar codes,” he said.

In another example, a Southern US state was able to slice and dice reams of student data in a myriad of ways to tease out the socioeconomic factors that influenced test scores. They then could advise their school districts on the qualitative factors they had control over, such as teacher-student ratio, that could improve performance.

Following next was Jack Zhang, who has been with the analytics group from its humble beginnings of Excel sheets and traditional statistical models. Today, he leads our work on AI-enabled feature discovery (AFD), which he describes as “boiling the ocean” to find the insights.

AFD is about testing every possible variation on a set of information to understand outcomes, such as why customers cancel a service or patients make certain choices.

“It’s a statistical-modeling concept that’s been around for years—but automation has changed everything,” he says. We can test every possible variation of immense data sets—hundreds of millions of variations—in a fraction of the time, and it highlights pockets of features we can dive into for new insights.”

He walked through an AFD model designed for a wireless company that was struggling with a stubborn 20 percent annual customer-churn rate. The team helped them construct an airtight 360-degree view of millions of customers and then ran the algorithm—more than 300 features emerged that signaled when a customer was about to cancel their contract, such as moving into a specific zip code. The company could address most of them with three overarching preemptive measures, representing $100 million in possible incremental revenue. The model is being adapted across more than ten industries including media, banking, and pharmaceuticals.

Adrija Roy, a geospatial expert, demoed our OMNI solution. It combines geospatial data (transit hubs, foot traffic, demographics) with customer psychographics (shopping history) and machine-learning techniques. Businesses are using OMNI to understand the economic value of each of their locations in the context of all of their channels. It’s guiding decisions on optimizing location networks, including opening and closing outlets and designing individual store experiences.

The final demo was about deep learning, presented by Vishnu Kamalnath, an electrical engineer and computer scientist, who did early work training humanoid robots.

In deep learning (DL), algorithms ingest huge sets of unstructured data, including text, audio, and video, and process them through multiple layers of neural networks, often producing insights humans or less complex models could not grasp. DL algorithms can detect underlying emotion in audio text, are used in facial recognition, and can track small, fast-moving objects in satellite imagery, such as fake license plates in traffic.

In a recent example, working with a bank to reduce fraud, the team developed an algorithm that surfaced and analyzed a key feature of fraudulent signatures—tiny blots created when a nervous, often inexperienced perpetrator faked a signature. They used the algorithm to test all of the existing signatures on record, garnering a 97 percent rate of accuracy in detecting fraudulent ones.

“People used to think of deep learning as a really expensive proposition, requiring complex hardware,” says Vishnu, “But the cost is dropping; tools are becoming commoditized. Don’t shy away from it as an esoteric solution.” Deep-learning applications are estimated to be $3.5 trillion to $5.8 trillion in value annually across 19 industries.

While technologies are advancing at a healthy pace, one challenge remains unchanged: getting right kind of data for the right use case at the right time. “The data component takes 80 percent of the time to gather, clean, and run in any project,” says Jack Zhang. “We still live by the maxim ‘garbage in, garbage out’.”