Pooling quality-related data into a "lake" yields new quality breakthroughs—thanks to innovative analytic techniques.

Most companies already make use of an array of well-known methodologies to keep quality under control. Those approaches, which include the application of lean-management techniques and Six Sigma tools, have been instrumental in significantly reducing quality deviations. Yet difficult-to-diagnose quality issues still drive up product costs and put reputations at risk.

Today's heavily instrumented, highly automated production environments present both an opportunity and a challenge for quality teams. The opportunity is provided by the sheer size of the "data lake," which may have grown by orders of magnitude over recent years. The challenge comes in knowing how to make use of all that data. Teams may lack a full picture of the data available to them, or an understanding of its relevance to quality outcomes.

That's where the machines come in. Advanced analytics approaches are transforming the way companies do many things, from retail operations to procurement, process yield improvement, and maintenance planning. Success in these areas has fueled interest in what analytics can do in others—and has spurred growth in the number of off-the-shelf applications and tools.

But on their own, even the smartest analytical tools aren't enough to produce meaningful quality improvement. That requires the right industry knowledge and careful change management as well. In this article, we'll look at how one company in the pharmaceutical sector applied such a combined approach to its own quality challenges. The results were impressive. The site, which already performed strongly in comparative benchmarks, identified the likely root causes of 75 to 90 percent of its remaining process-related quality deviations, and revealed opportunities to improve overall process stability and control.

Analyzing the data

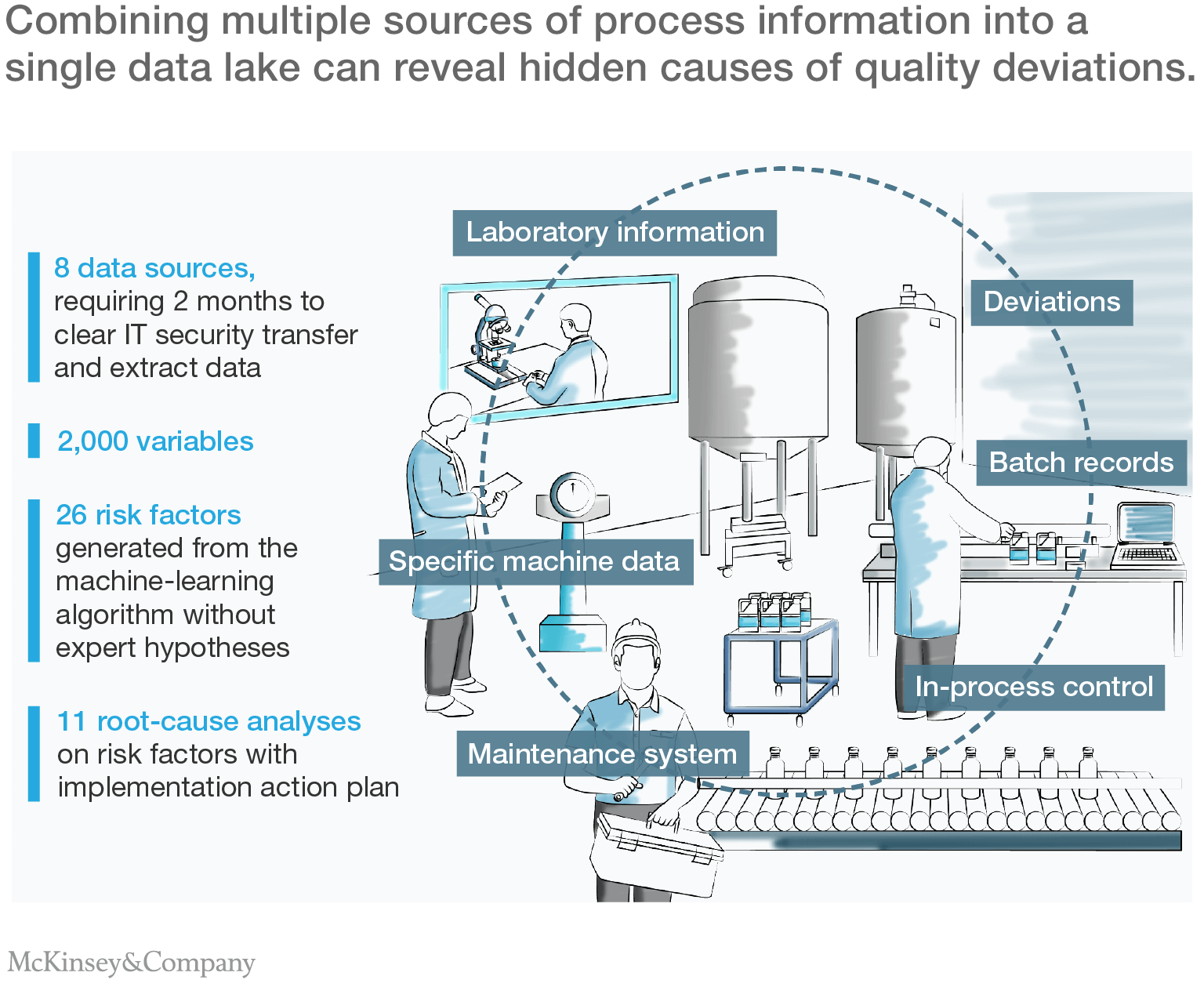

The first step in the company's advanced-analytics effort was to capture, store, structure, and clean its data. Like all modern pharmaceutical operations, the manufacturing environment was data-rich. Production machinery was highly automated and heavily instrumented. Intermediate and finished products were regularly sampled and analyzed in its on-site quality assurance laboratory. And production staff kept meticulous records.

Bringing all that data together into a form suitable for automated analysis required significant effort, however. The site stored its data in eight separate databases, using a variety of structured and unstructured formats, running on different computer systems. The objective was to combine data from more than two and a half years of production into a single data lake containing more than 2,000 parameters (Exhibit 1). Achieving it required close cooperation with the company's IT and compliance functions—especially to uphold corporate data-security and regulatory standards.
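The consolidation step can be pictured as joining records from each source system on a shared batch identifier. The snippet below is a minimal sketch under that assumption; the source names, field names, and the `build_data_lake` helper are hypothetical and are not taken from the company's systems.

```python
from collections import defaultdict


def build_data_lake(sources):
    """Merge per-source records into one row of parameters per batch.

    `sources` maps a source name (hypothetical, e.g. "process" or "lab")
    to a list of dict records, each carrying a "batch_id" key.
    """
    lake = defaultdict(dict)
    for source_name, records in sources.items():
        for record in records:
            batch_id = record["batch_id"]
            for key, value in record.items():
                if key == "batch_id":
                    continue
                # Prefix each parameter with its source to avoid name clashes.
                lake[batch_id][f"{source_name}.{key}"] = value
    return dict(lake)


# Illustrative records only; the values are invented for the example.
example_sources = {
    "process": [{"batch_id": "B1", "max_temp_c": 71.2}],
    "lab": [
        {"batch_id": "B1", "assay_pct": 99.1},
        {"batch_id": "B2", "assay_pct": 98.7},
    ],
}
example_lake = build_data_lake(example_sources)
```

A batch that is missing from one source simply ends up with fewer parameters, which is one of the gaps the cleaning step would need to handle.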

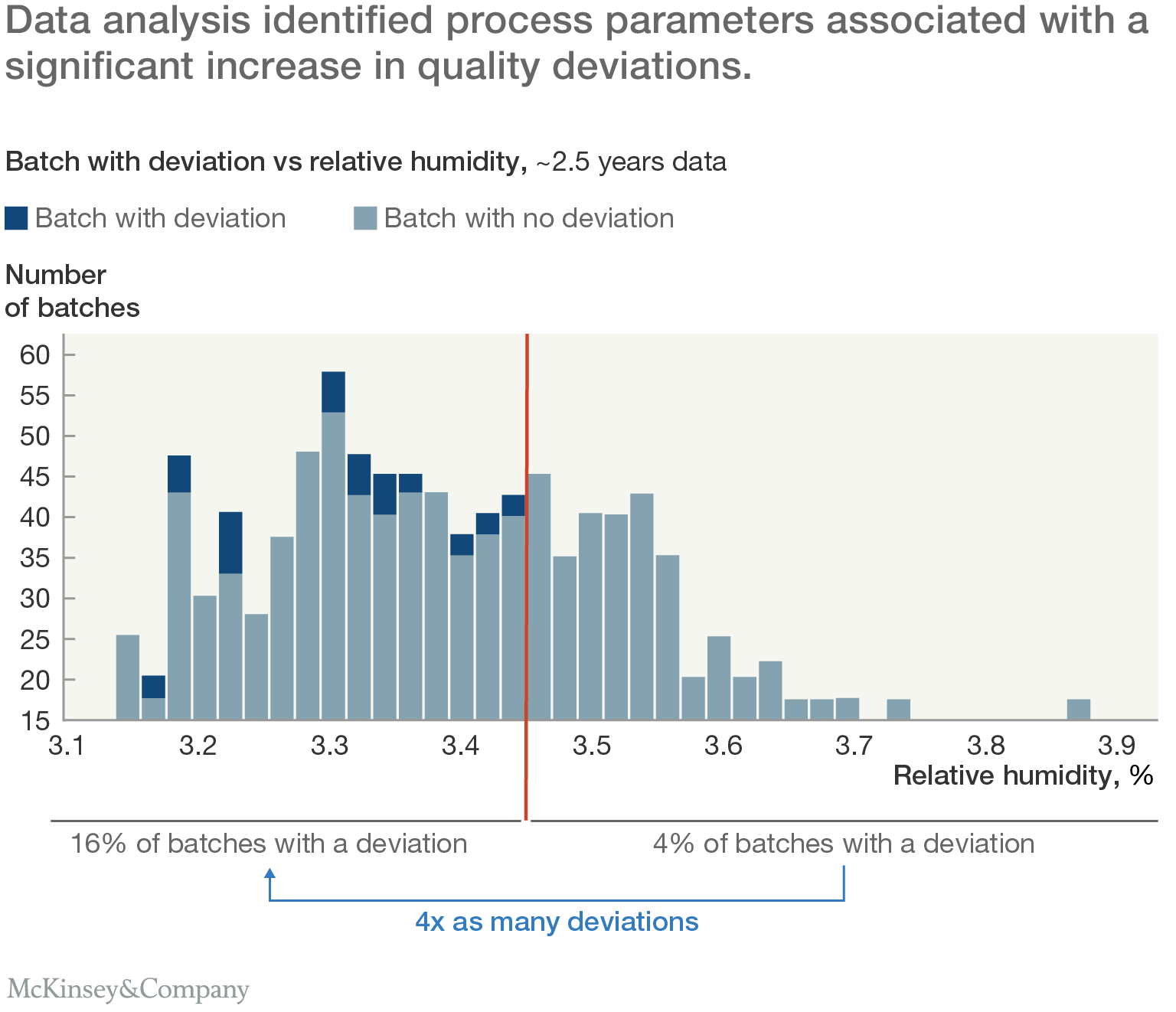

The next challenge was to identify relationships between process parameters and production deviations. A custom-coded decision-tree model iteratively searched the data lake to find factors associated with an increased probability of quality deviations. To identify the most important relationships, the team looked for specific risk factors, especially process parameter thresholds at which deviations were at least twice as likely to occur (Exhibit 2).
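To make the "at least twice as likely" criterion concrete, the sketch below scans candidate thresholds for a single parameter and keeps those where batches above the threshold deviate at least twice as often as batches at or below it. It is a simplified single-split scan, not the company's custom decision-tree model, and the `Batch` type, the `min_group` safeguard, and the function names are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Batch:
    value: float    # reading of one process parameter for the batch
    deviated: bool  # True if a quality deviation was recorded


def deviation_rate(batches):
    """Share of batches with a recorded deviation (0.0 for an empty group)."""
    if not batches:
        return 0.0
    return sum(b.deviated for b in batches) / len(batches)


def find_risk_thresholds(batches, min_ratio=2.0, min_group=3):
    """Return (threshold, risk_ratio) pairs that meet the minimum ratio.

    The risk ratio compares the deviation rate above the threshold with
    the rate at or below it. Groups smaller than `min_group` are skipped
    so that a handful of batches cannot produce a spurious finding.
    """
    findings = []
    for threshold in sorted({b.value for b in batches}):
        above = [b for b in batches if b.value > threshold]
        below = [b for b in batches if b.value <= threshold]
        if len(above) < min_group or len(below) < min_group:
            continue
        base, risk = deviation_rate(below), deviation_rate(above)
        if base == 0.0:
            ratio = float("inf") if risk > 0.0 else 0.0
        else:
            ratio = risk / base
        if ratio >= min_ratio:
            findings.append((threshold, ratio))
    return findings
```

A tree-based model would apply this kind of split repeatedly across many parameters and combinations of them; the single-parameter version only shows the test applied at each split.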

Applying human insight to identify root causes

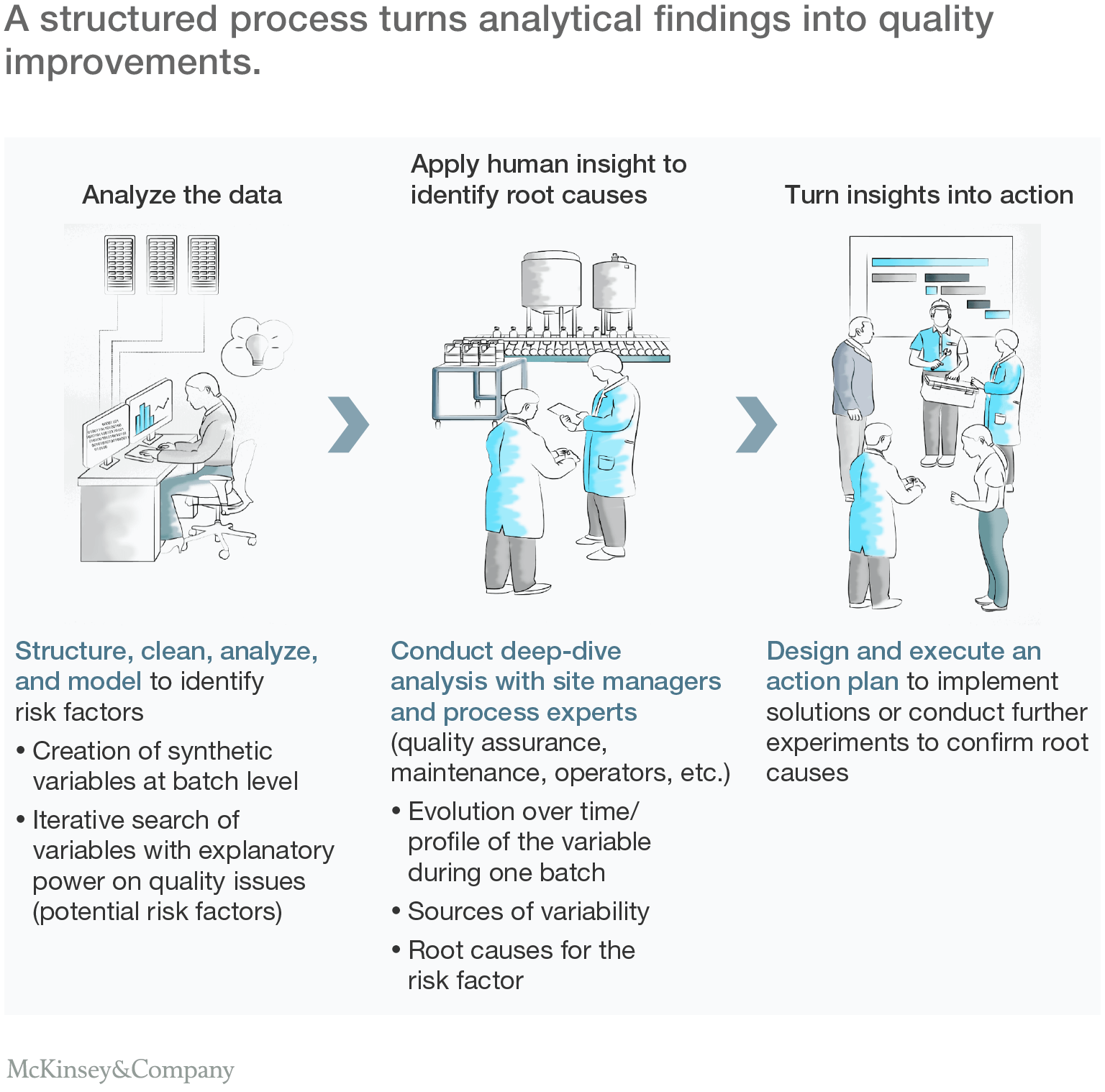

The risk-factor analysis identified more than two dozen points of potential interest. But since correlation does not necessarily mean causation, the company had its manufacturing-process specialists review the results. They helped weed out meaningless relationships, such as downstream process parameters associated with upstream deviations, to identify those worthy of further exploration.
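One of the checks described above, discarding findings in which the parameter sits downstream of the deviation it is supposedly linked to, can be expressed as a simple ordering rule. The sketch below assumes a hypothetical linear process sequence and a hypothetical record layout; the specialists' actual review relied on process knowledge that a rule like this cannot replace.

```python
# Hypothetical process sequence, listed from upstream to downstream.
PROCESS_ORDER = ["granulation", "compaction", "tableting", "coating"]


def plausible_findings(findings, order=PROCESS_ORDER):
    """Keep findings whose parameter is measured at or before the step
    where the deviation occurs; a later measurement cannot be its cause."""
    position = {step: index for index, step in enumerate(order)}
    return [
        f for f in findings
        if position[f["parameter_step"]] <= position[f["deviation_step"]]
    ]


# Illustrative findings only; parameter names are invented for the example.
example_findings = [
    {"parameter": "roller gap", "parameter_step": "compaction",
     "deviation_step": "tableting"},
    {"parameter": "coating density", "parameter_step": "coating",
     "deviation_step": "compaction"},
]
kept = plausible_findings(example_findings)
```

Here the second finding is dropped because a coating parameter cannot influence a deviation that has already occurred during compaction.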

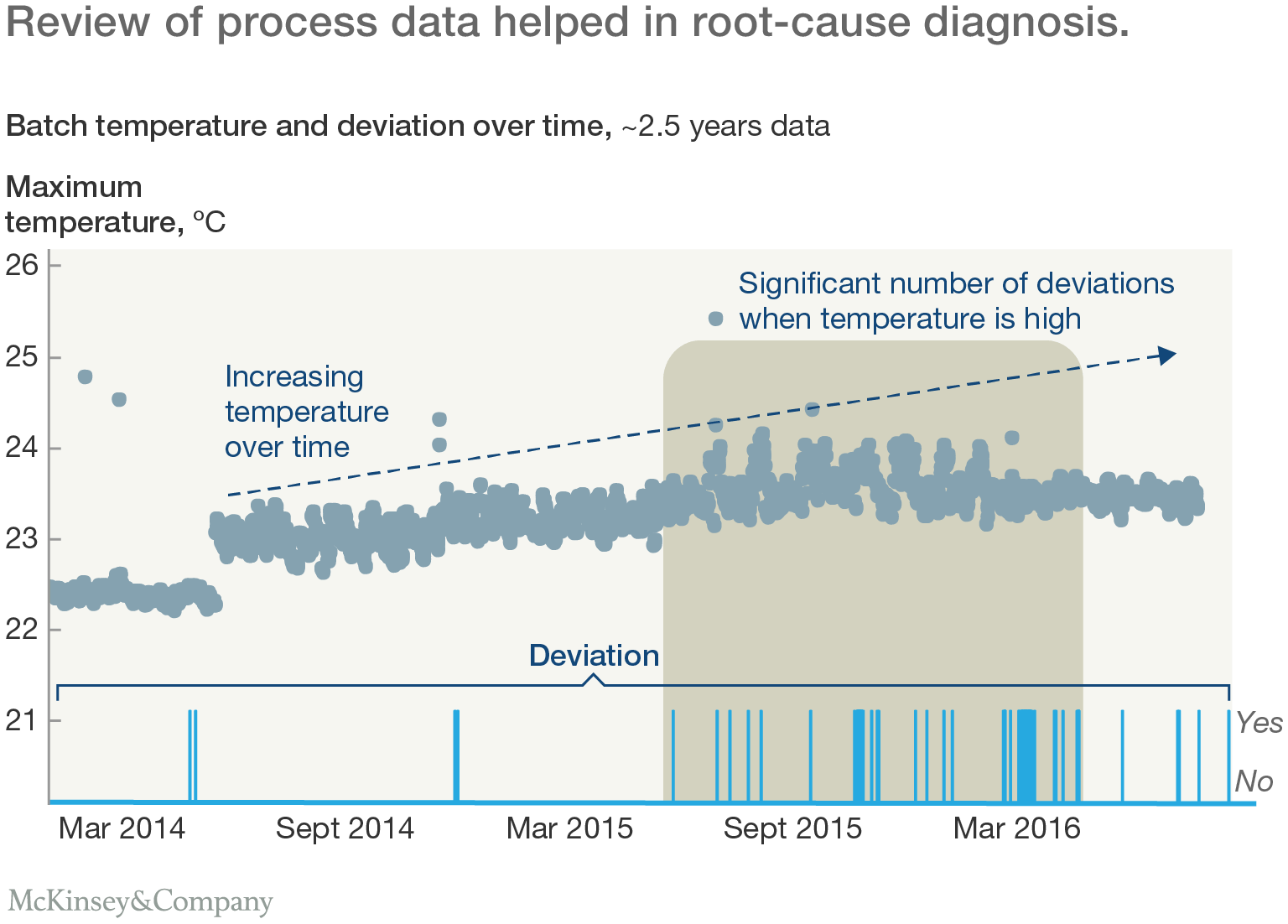

The company then conducted deep dives into specific areas. Some of this work took place in front of the analysis screen: looking in detail at the evolution of specific parameters over time, along with the associated incidence of quality deviations, for example. A lot of it happened on the shop floor. The teams studied the way processes were actually run and managed. They looked at factors that could potentially influence variability in process parameters, using well-established lean and root-cause analysis tools, such as the 5-whys approach.

That detailed, hands-on work revealed numerous opportunities for improvement. For example, one key process involved an exothermic reaction, with a water cooling system to remove excess heat from the reaction chamber. The analysis showed that higher temperatures during the reaction were associated with a greater number of deviations. Operators had assumed that the cooling system would hold the reaction temperature within an acceptable range, but the time-series data showed that the maximum temperature was not well controlled. It had been allowed to rise gradually over a number of months, leading to more deviations (Exhibit 3).
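A gradual rise of this kind is easy to miss batch by batch but shows up as a positive trend over a longer window. The sketch below fits a least-squares line to a series of monthly maximum temperatures and flags the series when the slope exceeds a limit. The function names and the limit are hypothetical, and the article does not say which method the team used to spot the drift.

```python
def trend_slope(values):
    """Least-squares slope of values against their position (0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two points to estimate a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    denominator = sum((x - mean_x) ** 2 for x in range(n))
    return numerator / denominator


def is_drifting(monthly_max_temps, limit_per_month=0.2):
    """Flag a series whose maximum temperature rises faster than the limit.

    `limit_per_month` is an illustrative value in degrees per month,
    not a figure from the case described here.
    """
    return trend_slope(monthly_max_temps) > limit_per_month
```

Checking the trend of the per-period maximum, rather than the average, matches the finding that it was the peak reaction temperature that had crept upward.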

In response, the team proposed a two-stage action plan. First, the cooling system's target temperature would be reduced. Second, if the maximum reaction temperature were still too high, the pure water used in the cooling system would be replaced with a lower-temperature solution containing anti-freeze.

In another part of the plant, analysis showed that variation in the density of a tablet-coating solution was associated with coating-quality problems. Batches with high peaks in density, which indicated poor mixing of coating ingredients, were more than two-and-a-half times as likely to suffer deviations. On the shop floor, the team found that the coating material was manually prepared, with little standardization in either the homogenization process or the waiting time prior to coating. The solution was the introduction of a new standard operating procedure for the mixing process.

Sometimes, relationships identified by the analytics system led to the discovery of root causes elsewhere. In one process step, material was compacted between two roller dies. The analysis showed a high probability of deviations when the air gap between the dies was at the high end of its normal range. That finding surprised the production team. The air gap was measured by the machine, but its setting was never altered. The team concluded that the changes were caused by peaks in pressure from the feed material, which was distorting the dies. In response, it adjusted the feed system's control parameters to stabilize the incoming flow.

Turning insights into action

The combination of data analysis, input from process experts, and shop-floor problem solving gave the company a list of actions to address potential sources of quality deviations. This list was prioritized by cost, difficulty, and potential impact to create an action plan that was rolled out over the ensuing months. Significantly, most actions involved changes to process settings or operating procedures, allowing them to be implemented quickly and with little additional capital investment (Exhibit 4).
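Ranking actions by cost, difficulty, and potential impact can be done with a simple score. The sketch below uses one possible formula, impact divided by the sum of cost and difficulty, on hypothetical 1-to-5 ratings. Both the formula and the ratings are assumptions made for illustration; the article does not describe how the company weighted the three criteria.

```python
from dataclasses import dataclass


@dataclass
class Action:
    name: str
    cost: int        # 1 (low) to 5 (high), hypothetical scale
    difficulty: int  # 1 (easy) to 5 (hard), hypothetical scale
    impact: int      # 1 (small) to 5 (large), hypothetical scale


def priority(action):
    """Higher is better: large impact for little cost and effort."""
    return action.impact / (action.cost + action.difficulty)


def prioritize(actions):
    """Return actions sorted from highest to lowest priority score."""
    return sorted(actions, key=priority, reverse=True)


# Ratings below are invented for the example.
example_plan = prioritize([
    Action("Replace cooling water with antifreeze solution", 3, 3, 4),
    Action("Lower cooling-system target temperature", 1, 1, 4),
    Action("Standardize coating-solution mixing procedure", 1, 2, 3),
])
```

With these ratings, the setting change and the new procedure rank ahead of the coolant replacement, which mirrors the observation that most actions needed little capital investment.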

Three pillars of successful analytics

While the approach used in this example was tailored to the specific needs of one company and its manufacturing processes, it demonstrates the three basic elements that must be addressed by any organization seeking to undertake a digital quality transformation:

- A systematic upfront assessment of the available data and a bespoke approach. Before the analysis begins, companies need to identify all the relevant sources of data, and they need to collect and organize that data in a coherent way. Since processes and datasets vary hugely between organizations, they may also need to develop a tailored approach to the analysis of their data. It's no coincidence that many of the most successful users of these techniques (including large e-commerce companies and other internet-based businesses) have access to considerable in-house expertise in coding and data analytics.

- The right people, including IT specialists, data analysts, process experts and change leaders. Effective analytics requires a wide range of skills, which means bringing together people with a range of profiles: IT specialists to extract and explain the data available in the organization; data scientists to build and run analytical models; site managers, process engineers and quality staff to interpret the results and find root causes; and change leaders to coordinate the project and help with problem solving.

- A structured action plan to translate analytical insights into shop-floor actions. Once the analysis is complete, companies must fix the quality problems they have found. That requires a deep understanding of processes and production equipment, and a program to manage change on the shop floor. Is the existing production equipment capable of holding the reaction temperature within the required range? What modifications to operating procedures, control parameters or machine specifications might be required to do this?

* * *

Could your quality teams make use of the same approach? Finding out might be more straightforward than you think. Your production sites are probably already generating a large quantity of data, even if it is not currently used by quality teams. To evaluate the potential of advanced analytics, pick a product and try the method out. High-volume products are usually the best initial target: they generate the most data and offer a bigger payback, and many quality issues are product specific. Most companies find that the benefits spread quickly, however, as they apply what they have learned about one product to others that use similar processes.

This article has focused on the efforts of one well-performing pharmaceutical company to address longstanding and difficult quality challenges. But advanced analytics can apply to a wide range of quality challenges at all kinds of manufacturing sites. It can give sites a faster way to get their basic quality under control, or help to eliminate the remaining unsolved quality issues at high-performing facilities.

About the authors: Jeremie Ghandour is a consultant in McKinsey's Lyon office, where Jean-Baptiste Pelletier is a partner; Gloria Macias-Lizaso Miranda is a partner in the Madrid office; and Julie Rose is an associate partner in the Paris office.